Generative Engine Optimization Checklist for 2026

The ONLY checklist you need for generative engine optimization

Your brand might rank on page one and still be completely invisible where millions of searches now end: inside an AI-generated answer.

Since Google launched AI Overviews broadly in May 2024 and OpenAI introduced ChatGPT Search in October 2024, the interface between a question and your content has fundamentally changed.

Gartner predicted a 25% drop in traditional search engine volume by 2026, and most B2B brands, founders, and lean marketing teams haven’t updated their playbook to match.

Generative engine optimization (GEO) is the practice of structuring content so that AI-powered answer engines (including ChatGPT, Perplexity, Gemini, and Google AI Overviews) can retrieve, cite, and surface it accurately.

Unlike traditional SEO, which targets blue-link rankings, GEO measures success by citation rate, brand mention share, and inclusion in synthesized answers. Research from Princeton found that targeted GEO interventions increased source visibility in generative responses by up to 40%.

This generative engine optimization checklist covers every layer that determines whether AI engines ignore or cite you: technical access, content structure, authority signals, brand mentions, measurement, and the avoidable mistakes keeping your brand out of the answer.

TL;DR

Generative engine optimization improves whether your brand is retrieved, cited, and summarized in AI answers, not just where it ranks in traditional search.

The highest-priority GEO fixes are crawlability, answer-first content structure, valid structured data, and stronger authority signals.

AI visibility depends on what your brand publishes on its own site and how consistently it is mentioned across trusted third-party sources.

A practical GEO measurement framework tracks citations, brand mentions, AI referral traffic, and summary accuracy rather than rankings alone.

Content that does not earn AI citations is increasingly content that does not perform in modern search.

What Is a Generative Engine Optimization Checklist?

Generative engine optimization (GEO) is the practice of making your content easier for AI systems to crawl, understand, retrieve, and cite in generated answers.

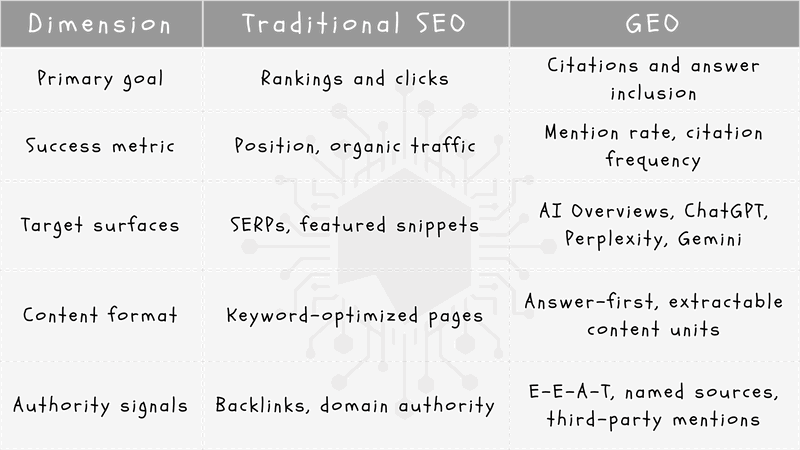

Where traditional SEO targets rankings and clicks in blue-link results, GEO targets citations, mentions, and inclusion in the answer layers produced by ChatGPT, Google AI Overviews, Perplexity, Gemini, and Copilot.

That distinction matters more than it might sound. A page can rank on page one and still be completely absent from every AI-generated answer your buyers see.

GEO does not replace SEO; it builds on it. Crawlability, helpful content, internal linking, and trust signals remain the foundation. Without those fundamentals in place, no GEO tactic will move the needle. Think of GEO as the layer you add once the basics are solid.

A Princeton-led research team found that targeted GEO methods increased source visibility in generative engine responses by up to 40%, with the strongest gains coming from adding statistics, quotations, and clear source cues.

Meanwhile, Google confirms there is nothing special creators need to do for AI features beyond following regular Search guidance and Search Essentials, which means the brands already executing SEO well have a head start.

SEO vs. GEO at a glance:

The generative engine optimization checklist in this guide functions as an execution tool rather than a strategy document. It tells your team exactly where you are losing AI visibility right now, before you invest another dollar in content production.

Checklist #1: Make Your Site Crawlable for AI Search

Every tactic in this generative engine optimization checklist depends on one prerequisite: AI crawlers must be able to reach your content. If they cannot, no other step in this guide will have any effect.

Start with your robots.txt file. AI search platforms operate their own distinct bots. OpenAI documents separate crawlers for different functions, including OAI-SearchBot for its search features, and allows site owners to manage access through robots.txt. Accidentally blocking these bots (or applying overly broad Disallow rules) removes your pages from consideration before any content quality factor comes into play.
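One way to run that review is sketched below, using Python's standard-library `urllib.robotparser` against the text of a robots.txt file. OAI-SearchBot comes from the paragraph above; the other user-agent tokens, the sample rules, and the example.com URL are assumptions for illustration, so confirm current tokens in each vendor's crawler documentation.

```python
from urllib.robotparser import RobotFileParser

# OAI-SearchBot is named in the text above; the other tokens are assumptions.
AI_BOTS = ["OAI-SearchBot", "GPTBot", "PerplexityBot", "bingbot"]


def blocked_bots(robots_txt: str, url: str, bots=AI_BOTS) -> list[str]:
    """Return the bots that this robots.txt text forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in bots if not parser.can_fetch(bot, url)]


# Hypothetical robots.txt: the GPTBot group blocks the whole site for that bot,
# while every other bot is only kept out of /admin/.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

if __name__ == "__main__":
    print(blocked_bots(SAMPLE_ROBOTS, "https://example.com/guides/geo-checklist"))
```

Running the check against a handful of your most important URLs, rather than only the homepage, is what surfaces an overly broad Disallow rule.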

Microsoft’s Bing Webmaster Guidelines reinforce the same principle: standard technical search hygiene is foundational for Copilot and Bing discoverability. The platforms differ in interface; the crawl requirements do not.

On the content-rendering side, prioritize server-side-rendered HTML for your most important pages. Key information buried inside JavaScript that requires client-side execution may never be read by AI crawlers. Similarly, content locked behind logins or paywalls is effectively invisible to answer engines. If visibility is your goal, that content needs to live in the open.
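A rough way to test this is to take the HTML exactly as the server returns it, before any JavaScript runs, and check whether your key phrases appear in the visible text. The sketch below assumes you already have that raw response body as a string; the sample markup and phrases are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text that sits outside <script> and <style> elements."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.depth = 0  # greater than 0 while inside a skipped element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth == 0:
            self.chunks.append(data)


def missing_from_raw_html(raw_html: str, key_phrases: list[str]) -> list[str]:
    """Return the phrases that do not appear in the un-rendered HTML text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [p for p in key_phrases if p.lower() not in text]


# Hypothetical page: the price only exists inside a script, so a crawler
# that does not execute JavaScript would never see it.
SAMPLE_HTML = """
<html><body>
<h1>Pricing</h1>
<div id="app"></div>
<script>render({plan: "Starter plan costs $29"});</script>
</body></html>
"""

if __name__ == "__main__":
    print(missing_from_raw_html(SAMPLE_HTML, ["Pricing", "Starter plan costs $29"]))
```

Any phrase the function returns is content that depends on client-side rendering and is a candidate for moving into server-rendered HTML.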

llms.txt is an optional file that helps organize site context for language models, pointing them toward your most relevant content. Implementation is worth considering, but solid technical search hygiene and crawlable HTML still do the heavy lifting.

Run through this mini-checklist before moving to any other GEO step:

robots.txt review: Confirm AI-related crawlers are not blocked unintentionally

Sitemap freshness: Ensure your XML sitemap reflects current, indexed pages

Render check: Verify critical content is visible in raw HTML, not JavaScript-dependent

Crawl log review: Look for bot errors, blocked paths, or missed pages

Page-speed sanity check: Slow load times can limit crawl depth on large sites
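The sitemap item in the list above can be spot-checked with a short script. This sketch flags sitemap entries whose `<lastmod>` is missing or old; the 90-day threshold and the sample URLs are assumptions, not a documented cutoff used by any search or AI platform.

```python
import xml.etree.ElementTree as ET
from datetime import date, timedelta

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def stale_urls(sitemap_xml: str, today: date, max_age_days: int = 90) -> list[str]:
    """Return URLs whose <lastmod> is missing, unparseable, or too old."""
    cutoff = today - timedelta(days=max_age_days)
    stale = []
    for url in ET.fromstring(sitemap_xml).findall("sm:url", NS):
        loc = url.findtext("sm:loc", default="", namespaces=NS).strip()
        lastmod = url.findtext("sm:lastmod", default="", namespaces=NS).strip()
        try:
            # Full timestamps start with YYYY-MM-DD, so the first 10
            # characters are enough for a day-level comparison.
            modified = date.fromisoformat(lastmod[:10])
        except ValueError:
            modified = None
        if modified is None or modified < cutoff:
            stale.append(loc)
    return stale


# Hypothetical sitemap with one fresh, one old, and one undated entry.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/guide</loc><lastmod>2026-01-10</lastmod></url>
  <url><loc>https://example.com/old</loc><lastmod>2024-03-01T08:00:00+00:00</lastmod></url>
  <url><loc>https://example.com/undated</loc></url>
</urlset>"""

if __name__ == "__main__":
    print(stale_urls(SAMPLE_SITEMAP, today=date(2026, 2, 1)))
```

A stale `<lastmod>` is not proof that a page is outdated, only a prompt to look at it.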

Fix access first, because everything else builds on this foundation.

Checklist #2: Structure Content So AI Can Extract Answers

AI systems are built to extract discrete, usable answers, not to wade through long introductions and dense blocks of marketing copy. If your content buries the key point in paragraph four, generative engines will often skip it entirely or pull from a competitor who answered directly.

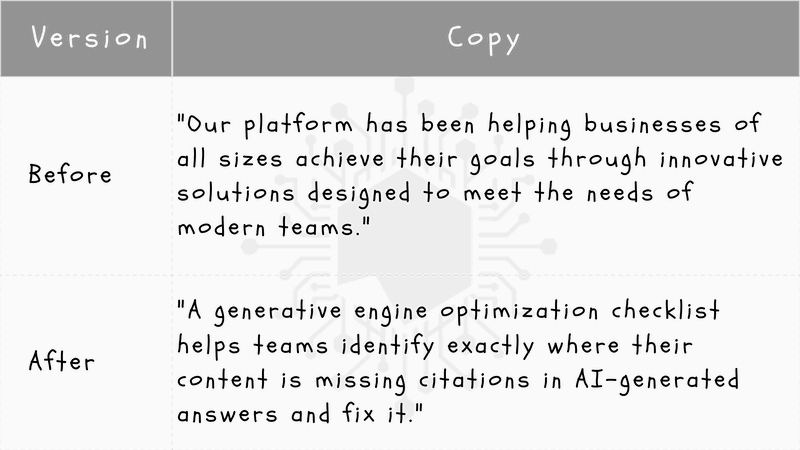

The fix is answer-first writing: open every major section with a 1–3 sentence direct answer, then follow with context, evidence, and elaboration. This mirrors how retrieval-augmented generation systems scan for relevant passages to surface in responses.

Formatting rules that consistently appear in cited content:

Question-based headings: phrase H2s and H3s as the exact questions your audience types

Short paragraphs: 2–4 sentences maximum; one idea per paragraph

Bullet points and numbered steps: break processes and lists out of prose

Comparison tables: ideal for “X vs. Y” queries where AI engines need structured contrast

Concise summaries: restate the core point at the end of long subsections

Add a visible quick answer block near the top of the article and at the start of key subsections. This gives AI engines a clean, quotable unit without requiring them to synthesize meaning from surrounding paragraphs.

The research backs this approach. Aggarwal et al. at Princeton found especially strong visibility gains from adding quotations, statistics, and clearer source cues to content (all of which depend on structured, extractable formatting). Google’s helpful content guidance reinforces the same principle: reliable, people-first content is the foundation of search visibility, whether that’s a blue link or an AI citation.

Before and after example:

Checklist #3: Target Conversational Queries and Intent Clusters

AI search doesn’t wait for you to rank for a broad head term. When someone types a natural-language prompt into ChatGPT Search or Google AI Overviews, the engine retrieves sources that directly answer the question. Both have been mainstream since Google’s broad U.S. rollout in May 2024 and OpenAI’s ChatGPT Search launch in October 2024. If your content is built around short, generic keywords rather than real buyer questions, you’re invisible in that layer.

Shift from head terms to intent clusters. Every topic you cover should map to a cluster of conversational, high-intent questions rather than a single broad phrase. Build four query types into each cluster:

Definitional: “What is [topic]?”

Comparison: “[Option A] vs. [Option B]: which is better for [use case]?”

Problem-solution: “How do I fix [specific problem]?”

Evaluation: “Is [product/approach] worth it for [context]?”

These long-tail queries match how buyers actually phrase prompts in AI interfaces, and they give each page a clear retrieval target.

Mine the right sources for real questions. Don’t guess at phrasing. Pull questions from:

Google’s People Also Ask panels

Sales call transcripts and CRM notes

Support tickets and live chat logs

AI follow-up prompts (ask ChatGPT or Perplexity a seed question, then capture every suggested follow-up)

These sources surface the exact language your buyers use, which is the language AI engines are trained to recognize and answer.

Keep one primary entity per page. Each page should own a single topic or entity, with related entities supported through internal links to dedicated pages. Diluting a page across five loosely connected subtopics weakens its retrieval signal for any one of them.

Use this four-step workflow to execute:

Collect: gather 20–30 raw questions from the sources above

Group by intent: sort into definitional, comparison, problem-solution, and evaluation buckets

Map to pages: assign each group to an existing or new page; avoid splitting one intent cluster across multiple URLs

Rewrite headings: replace vague H2s with the user’s exact phrasing, such as “How does [X] work?” instead of “Overview of [X]”

Matching your headings to user phrasing is one of the fastest wins in conversational search optimization. It signals to AI retrieval systems exactly which question your content answers.
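The "group by intent" step in the workflow above can be roughed out in code before a human reviews the buckets. The keyword patterns here are hypothetical heuristics, not how any AI engine classifies queries, and anything they miss lands in an "unsorted" bucket for manual sorting.

```python
import re
from collections import defaultdict

# Hypothetical patterns. The first matching pattern wins, so order matters.
INTENT_PATTERNS = [
    ("comparison", re.compile(r"\bvs\b|\bversus\b|\bbetter\b|\bcompared? (to|with)\b", re.I)),
    ("evaluation", re.compile(r"\bworth\b|\bshould (i|we)\b", re.I)),
    ("problem-solution", re.compile(r"^how (do|can|to)\b|\bfix\b", re.I)),
    ("definitional", re.compile(r"^what (is|are)\b", re.I)),
]


def group_by_intent(questions: list[str]) -> dict[str, list[str]]:
    """Sort raw questions into the four intent buckets, plus 'unsorted'."""
    groups = defaultdict(list)
    for question in questions:
        text = question.strip()
        label = next(
            (name for name, pattern in INTENT_PATTERNS if pattern.search(text)),
            "unsorted",
        )
        groups[label].append(text)
    return dict(groups)


if __name__ == "__main__":
    sample = [
        "What is generative engine optimization?",
        "GEO vs. SEO: which is better for B2B?",
        "How do I fix a blocked AI crawler?",
        "Is an AEO audit worth it for a small team?",
    ]
    for intent, items in group_by_intent(sample).items():
        print(intent, items)
```

Treat the output as a first pass: the mapping of each group to a page still needs editorial judgment.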

Checklist #4: Add Authority Signals, Sources, and E-E-A-T

AI systems don’t just retrieve relevant text; they evaluate whether a source is credible enough to cite. LLM-optimized SEO requires you to think beyond topical coverage and actively demonstrate trustworthiness at every layer of your content.

Google’s people-first content guidance frames this clearly: helpful, reliable content backed by strong trust signals is the foundation for visibility.

That framing maps directly onto E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness), the framework from Google’s quality guidelines that describes the trust signals AI systems appear to favor, implicitly or explicitly, when deciding which sources to surface.

What AI engines look for in a credible source:

Named authors with visible credentials, professional bios, and links to their broader work

Reviewer or editor credits on technical or medical content where subject-matter verification matters

Organization transparency: a clear About page, Contact page, and consistent brand identity across the site

First-hand experience: product walkthroughs, original test data, or direct case examples that signal the author was actually there

Generic recycled claims don’t earn citations. Instead, use named sources, recent statistics, and expert quotes linked directly to primary research.

When you have original data, proprietary benchmarks, or firsthand product experience, lead with it. Unique evidence is more citation-worthy than a summary of what others have already published.

The Princeton GEO research supports this, reporting stronger visibility gains when content included quotations, statistics, and explicit source cues rather than unsupported assertions.

Authority signal checklist for every key page:

Author Credentials: Named author with a linked bio and visible credentials.

Verification: Reviewer or editor credit where applicable.

Recency: Publish date and last-updated date displayed on the page.

Citations: At least two primary-source citations with working links.

External Proof: Supporting references from recognized institutions or publications.

Transparency: Clear About and Contact pages accessible from site navigation.

Run this checklist against your highest-priority pages before moving to the next step.

Checklist #5: Use Structured Data and Clear Entity Signals

Structured data is not a GEO-specific tactic; it’s a foundational signal that helps search systems understand what your page is about, who created it, and how it relates to other entities.

As Google Search Central confirms, no special technical markup is required for AI features beyond existing search guidance. What standard schema markup does is give search systems a machine-readable layer that clarifies content types, entity relationships, and organizational identity.

When valid structured data matches what’s visibly on the page, it reduces ambiguity for both traditional crawlers and AI retrieval systems. The operative word is matches.

The schema must reflect real, visible on-page content, and marking up claims, credentials, or FAQs that don’t appear in the actual text is a misuse of the standard.

Google’s structured data documentation is explicit that valid markup helps search understand content types and relationships, not substitute for them.
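The "markup must match the page" rule can be checked mechanically for FAQ markup. This sketch assumes you have the page's JSON-LD as a string and its visible text as a string (both samples are hypothetical), and it reports any marked-up question that does not appear on the page.

```python
import json


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences don't matter."""
    return " ".join(text.split()).lower()


def unmatched_faq_questions(jsonld_text: str, visible_text: str) -> list[str]:
    """Return FAQPage questions in the markup that are absent from the page text."""
    data = json.loads(jsonld_text)
    if data.get("@type") != "FAQPage":
        return []
    entities = data.get("mainEntity", [])
    if isinstance(entities, dict):  # a single Question instead of a list
        entities = [entities]
    page = _normalize(visible_text)
    questions = [item.get("name", "") for item in entities]
    return [q for q in questions if _normalize(q) not in page]


# Hypothetical markup with two questions; only the first is on the page.
SAMPLE_JSONLD = json.dumps({
    "@context": "https://schema.org",
    "@type": "FAQPage",
    "mainEntity": [
        {"@type": "Question", "name": "Does GEO replace SEO?",
         "acceptedAnswer": {"@type": "Answer", "text": "No, it builds on it."}},
        {"@type": "Question", "name": "Is GEO free?",
         "acceptedAnswer": {"@type": "Answer", "text": "It depends."}},
    ],
})
SAMPLE_PAGE_TEXT = "FAQ  Does GEO replace   SEO? No, it builds on it."

if __name__ == "__main__":
    print(unmatched_faq_questions(SAMPLE_JSONLD, SAMPLE_PAGE_TEXT))
```

A non-empty result means the markup claims something the visible page does not say, which is the mismatch described above.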

Schema types most useful for GEO:

Article: for editorial content, guides, and how-to posts

Organization: establishes your brand name, logo, URL, and contact details

Person: connects named authors to their credentials and published work

FAQPage: marks up question-and-answer pairs that AI systems frequently extract

HowTo: structures step-by-step processes for direct retrieval

Product: supports commercial pages with clear names, descriptions, and review data

Review / AggregateRating: adds credibility signals to product and service pages
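To make the first three types in the list above concrete, here is a minimal sketch that builds Article markup with a nested Person author and Organization publisher, then wraps it in a JSON-LD script tag. The property names are standard schema.org vocabulary; the function names and every value (Jane Doe, Example Co, the example.com URLs, the dates) are placeholders, and each value should mirror what is visibly on the page.

```python
import json


def build_article_jsonld(headline, page_url, date_published, date_modified,
                         author_name, author_url, org_name, org_url) -> dict:
    """Build schema.org Article markup with nested Person and Organization."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": page_url,
        "datePublished": date_published,
        "dateModified": date_modified,
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        "publisher": {"@type": "Organization", "name": org_name, "url": org_url},
    }


def as_script_tag(data: dict) -> str:
    """Serialize markup for embedding in the page's HTML."""
    # Escape "<" so a value containing "</script>" cannot end the tag early.
    body = json.dumps(data, indent=2).replace("<", "\\u003c")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


if __name__ == "__main__":
    markup = build_article_jsonld(
        headline="Generative Engine Optimization Checklist for 2026",
        page_url="https://example.com/geo-checklist",
        date_published="2026-01-05",
        date_modified="2026-01-20",
        author_name="Jane Doe",
        author_url="https://example.com/authors/jane-doe",
        org_name="Example Co",
        org_url="https://example.com",
    )
    print(as_script_tag(markup))
```

Validate the generated markup with a structured data testing tool before shipping it.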

Beyond schema, entity clarity matters just as much. Use your brand name consistently across every page, your About page, author bios, and any third-party profiles.

Internal links should reinforce topic relationships (connecting your author bio to their articles, your Organization page to your core content hubs, and your product pages to supporting guides).

Inconsistent naming, missing author identity, or orphaned pages all create entity ambiguity. AI systems build understanding from patterns across your site.

The cleaner and more consistent those patterns are, the easier it is for a generative engine to accurately represent your brand.

Checklist #6: Build Brand Mentions Beyond Your Website

Your owned content is only one input. AI engines learn about brands from across the entire web.

Training data, indexed pages, community discussions, editorial sources, and third-party directories all shape how a model understands who you are and whether you’re worth citing.

If your brand exists only on your domain, you have a weak entity footprint, and AI systems will treat you accordingly.

This is one of the most common reasons brands are invisible in AI search. Strong on-site content matters, but without third-party citations and off-site brand mentions, you lack the corroborating signals that build entity authority in a generative engine's eyes.

Where off-site mentions matter most:

Review platforms: G2, Capterra, Trustpilot, and category-specific review sites are indexed and frequently referenced

Community threads: Reddit is particularly significant; OpenAI’s GPT-3 research cited a Reddit-linked dataset as a meaningful component of training data, making authentic participation in relevant subreddits a legitimate visibility tactic

Industry publications: editorial coverage, contributed articles, and analyst roundups carry high credibility signals

Reputable list articles: “best of” and comparison posts in your category are frequently cited by AI engines when answering evaluation queries

Partner directories and podcasts: consistent brand naming across these surfaces reinforces entity clarity

Prioritize mentions where your target buyers already research solutions. Niche communities and credible editorial sources outperform generic press releases every time.

As Reuters has documented, earned visibility beyond owned channels is becoming a material competitive factor in the AI search era.

Mention-building tactics your team can run:

Expert commentary: pitch your subject-matter experts to journalists and industry newsletters

Original research PR: proprietary data earns citations because it gives writers something unique to reference

Founder quotes: named, attributed quotes in third-party articles build both brand mentions and E-E-A-T signals simultaneously

Directory optimization: claim and complete every relevant listing with consistent brand naming

Community participation: answer questions genuinely in forums and niche Slack groups where buyers are active

Brand discoverability in AI search is a function of how widely and consistently your brand is referenced across credible, independent sources.

Digital marketing and search engine optimization have always rewarded authority; GEO simply makes the off-site dimension of that authority more visible and more measurable.

Checklist #7: Measure GEO Performance With Citation-Focused KPIs

AI visibility tracking requires a different measurement model.

Traditional SEO metrics (rankings, organic sessions, click-through rate) were built for a link-based search world. AI answer visibility is often zero-click and citation-based.

Bain found 80% of consumers rely on zero-click results in at least 40% of their searches, meaning your brand can appear in a generated response without producing a single trackable pageview.

If you only measure what your existing dashboard shows, you are measuring the wrong thing.

The core GEO KPIs to track

Define your baseline around these six metrics before making any major content changes:

AI citation frequency: How often your URLs or brand name appear as cited sources across target prompts

Brand mention share: Your brand’s presence in AI answers relative to competitors on the same queries

AI referral traffic: Sessions arriving from platforms like ChatGPT Search (which now surfaces linked publisher citations, per OpenAI) and Perplexity

Query coverage: The percentage of your 10–20 priority prompts where your brand appears at all

Summary accuracy: Whether AI-generated descriptions of your product or service are factually correct

Downstream conversions: Revenue or lead actions from sessions that originated via AI referral

The manual workflow for small teams

You do not need an enterprise tool to start. Run this process monthly:

Choose 10–20 priority prompts that reflect real buyer questions in your category

Test each prompt across ChatGPT, Perplexity, Gemini, and Google AI Overviews

Log whether your brand is mentioned, whether a URL is cited, and which competitor appears instead

Record results in a simple spreadsheet with a date column so you can track movement over time
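Once the spreadsheet has a few rows, three of the KPIs above reduce to simple arithmetic. The sketch below is one reasonable way to compute them, not a standard: the field names and formulas are assumptions (for example, mention share is taken as brand mentions divided by brand plus competitor mentions), and the sample rows are invented.

```python
from dataclasses import dataclass, field


@dataclass
class PromptTest:
    """One row of the log: a single prompt tested on a single platform."""
    prompt: str
    platform: str
    brand_mentioned: bool
    url_cited: bool
    competitors: list = field(default_factory=list)


def geo_kpis(tests: list) -> dict:
    """Compute citation frequency, mention share, and query coverage."""
    if not tests:
        raise ValueError("need at least one logged test")
    brand_mentions = sum(t.brand_mentioned for t in tests)
    all_mentions = brand_mentions + sum(len(t.competitors) for t in tests)
    prompts = {t.prompt for t in tests}
    covered = {t.prompt for t in tests if t.brand_mentioned}
    return {
        # Share of tests in which one of your URLs was cited.
        "citation_frequency": sum(t.url_cited for t in tests) / len(tests),
        # Your mentions relative to all brand mentions logged.
        "brand_mention_share": brand_mentions / all_mentions if all_mentions else 0.0,
        # Share of prompts where you appear on at least one platform.
        "query_coverage": len(covered) / len(prompts),
    }


# Invented sample log: two prompts, two platforms each.
SAMPLE_LOG = [
    PromptTest("best crm for startups", "ChatGPT", True, True, ["RivalCo"]),
    PromptTest("best crm for startups", "Perplexity", False, False, ["RivalCo", "OtherCo"]),
    PromptTest("what is geo", "ChatGPT", False, False, []),
    PromptTest("what is geo", "Gemini", False, False, ["RivalCo"]),
]

if __name__ == "__main__":
    print(geo_kpis(SAMPLE_LOG))
```

Whatever definitions you choose, keep them fixed from month to month so the trend is comparable.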

Industry platforms have begun adding AI Overview and answer engine reporting precisely because the market has shifted from ranking-only measurement to citation and mention monitoring (Semrush).

Even basic manual logging puts you ahead of teams still relying solely on position tracking.

Segment and baseline before you optimize

Where your analytics platform allows, segment traffic by referral source to isolate AI-driven sessions. Keep that baseline intact before launching new content initiatives; it is the only way to measure whether your GEO changes are working.
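If your analytics export includes referrer URLs, the segmentation can be approximated with a hostname lookup. The domain list below is an assumption to verify against your own referral reports, and the approach has a known gap: clicks from Google AI Overviews generally arrive with an ordinary Google referrer, so they likely cannot be separated this way.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames; check them against your own analytics data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer: str) -> str:
    """Map a full referrer URL (scheme included) to an AI platform or 'other'."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, label in AI_REFERRERS.items():
        if host == domain or host.endswith("." + domain):
            return label
    return "other"


def segment_sessions(referrers: list[str]) -> Counter:
    """Count sessions per segment from a list of referrer URLs."""
    return Counter(classify_referrer(r) for r in referrers)


if __name__ == "__main__":
    sample = [
        "https://chatgpt.com/",
        "https://www.perplexity.ai/search?q=geo",
        "https://www.google.com/",
        "",  # direct visit with no referrer
    ]
    print(segment_sessions(sample))
```

Capturing these counts before any content changes gives you the baseline this section describes.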

The core principle here is direct: you cannot win in AI search without first knowing where you are losing. Citation-focused KPIs turn that unknown into a repeatable measurement practice.

Checklist #8: Avoid the GEO Mistakes That Keep Brands Invisible

You’ve now worked through every step of this generative engine optimization checklist: crawlability, structure, query targeting, authority, schema, off-site mentions, and measurement.

This final step is about protecting that work by naming the errors that quietly undo it.

The most common GEO mistakes teams make:

Blocking AI crawlers: accidentally excluding search-related bots through overly broad robots.txt rules or restrictive preview controls

Hiding content in JavaScript: burying key answers in client-side rendering that AI systems can’t reliably extract

Publishing generic AI-written fluff: thin, unsourced content that adds no unique evidence and gives engines nothing citation-worthy to surface

Skipping citations and sources: making claims without linking to primary research, data, or named experts

Ignoring content freshness: leaving outdated pages unrefreshed when AI systems favor current, accurate information

Measuring only rankings: tracking blue-link position while missing citation rate, mention share, and AI referral traffic entirely

Google advises following Search Essentials and helpful content guidance rather than chasing shortcuts, and that guidance applies directly to these mistakes. There’s no workaround for thin content or blocked crawlers.

Why publishing more content without fixing fundamentals wastes budget

Lean teams often respond to low AI visibility by producing more pages. Volume without structure, entity clarity, or third-party authority doesn’t move the needle.

AI engines don’t reward quantity; they cite sources that are accessible, credible, and clearly organized. More content built on a broken foundation just scales the problem.

With Google AI Overviews now live in more than 100 countries and territories, these aren’t edge-case concerns. They’re mainstream visibility losses happening on a global scale.

Priority recap (fix in this order):

Access first: confirm crawlers can reach your most important pages

Structure second: reformat content for extractable, answer-first clarity

Authority third: build E-E-A-T signals and earn third-party mentions

Measurement fourth: track citation KPIs, not just rankings

If you’re not sure where your brand is losing visibility across ChatGPT, Perplexity, Gemini, and AI Overviews, an AEO Audit gives you a clear, platform-by-platform picture of exactly where you’re absent and what to fix first.

Frequently Asked Questions

How do you do generative engine optimization?

Generative engine optimization (GEO) is achieved through a multi-layered approach:

Technical Access: Ensure pages are fully crawlable and indexable by AI bots.

Content Structure: Use answer-first formatting and clear headings.

Authority: Include trustworthy citations and strong E-E-A-T signals.

Entity Clarity: Establish clear brand and author signals for AI extraction.

Research from Princeton University found that these targeted interventions can increase source visibility by up to 40%.

Does GEO replace SEO in 2026?

Generative engine optimization does not replace SEO; it builds directly on core SEO fundamentals, including crawlability, technical site health, and helpful content.

The primary shift is in how success is measured: GEO expands the definition of visibility to include citations, brand mentions, and inclusion in AI-generated answers beyond blue-link rankings.

Google’s own guidance confirms this continuity, stating that sites should follow existing Search Essentials rather than pursue separate AI-specific optimization.

Teams that neglect traditional SEO foundations will find GEO performance suffers as a direct consequence, since AI retrieval systems depend on the same crawl access and content quality signals that search engines have always required.

What is LLM optimization?

LLM optimization is the practice of structuring content so that large language models can accurately interpret, retrieve, and reference it when generating answers.

In practical terms, this means writing in clear, direct language, leading with explicit answers, supporting claims with verifiable facts, and establishing strong signals of source credibility.

Because systems like ChatGPT Search (now reaching over 900 million weekly active users) and Google AI Overviews pull from indexed web content, technical accessibility (including proper bot permissions and crawlable page structures) is a prerequisite for any LLM optimization effort.

The discipline overlaps significantly with GEO but places particular emphasis on how language models process and represent information rather than on traditional ranking signals alone.

Do I need special schema markup for AI search?

Google has explicitly stated that no special technical markup is needed beyond existing search guidance.

Standard structured data types (including Article, Organization, Person, FAQPage, HowTo, and Product) can still help search systems understand content and named entities more precisely when implemented accurately.

According to Google Search Central, valid and relevant structured data may indirectly support inclusion in AI-powered search experiences by improving how systems interpret page context.

The priority should be ensuring existing structured data is correct and complete, rather than searching for a schema type designed exclusively for generative engines.

How do I know if my brand is invisible in AI search?

The most direct method is to manually test 10 to 20 high-value prompts in ChatGPT, Perplexity, Gemini, and Google AI Overviews. Log whether the brand appears, how it is described, and which competing sources are cited instead.

If competitors are consistently cited and the brand is absent across multiple platforms, AI visibility is weak regardless of traditional search rankings.

This gap typically signals problems with crawl access, insufficient off-site authority, or content lacking the factual specificity that AI retrieval systems favor.

Tracking this data on a recurring basis (rather than as a one-time audit) is necessary because AI systems update their retrieval behavior as new content is indexed and model weights change.

How often should I update content for GEO?

High-value pages should be reviewed quarterly at a minimum. Topics where facts, statistics, or industry conditions change quickly need faster review cycles.

Regular updates preserve factual accuracy, strengthen content freshness signals, and increase the likelihood that AI systems will continue to retrieve and cite the page over time. Outdated or factually stale content is a liability in generative search.

AI systems can surface inaccurate summaries if the underlying source has not been maintained.

Content updates should be substantive (correcting outdated claims, adding new evidence, and improving structural clarity) rather than superficial edits made solely to refresh a publication date.

What is the biggest mistake companies make with generative engine optimization?

The biggest mistake is publishing more content without first fixing foundational problems with crawlability, page extractability, and off-site authority. Increasing content volume on a site that AI bots cannot reliably access typically raises production costs without adding any AI visibility.

Without credible external recognition, citation visibility in AI-generated answers won’t improve. OpenAI’s documentation on its crawlers confirms that bot access and site permissions directly govern ChatGPT Search eligibility.

Technical barriers upstream will neutralize even well-written content. Companies achieve better GEO outcomes by auditing and resolving foundational issues first, then scaling content production from a technically sound and authoritative base.
